large language model

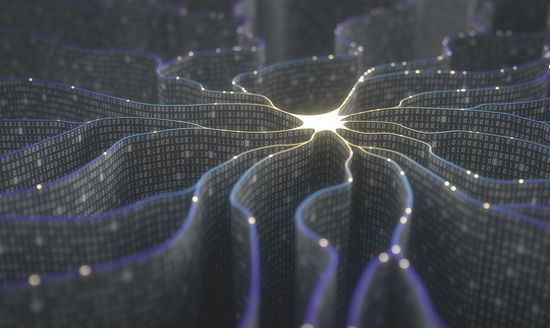

large language model (LLM), a deep-learning algorithm that uses a massive number of parameters and a massive amount of training data to understand and predict text. This generative artificial intelligence-based model can perform a variety of natural language processing tasks beyond simple text generation, including revising and translating content.

Underlying mechanisms

The word large refers to the parameters, or variables and weights, used by the model to influence the prediction outcome. Although there is no agreed-upon threshold for how many parameters make a model large, LLMs range in size from 110 million parameters (Google’s BERTbase model) to 340 billion parameters (Google’s PaLM 2 model). Large also refers to the sheer amount of data used to train an LLM, which can be multiple petabytes in size and contain trillions of tokens, the basic units of text or code, usually a few characters long, that the model processes.

LLMs aim to produce the most probable sequence of words for a given prompt. Smaller language models, such as the predictive text feature in text-messaging applications, may fill in the blank in the sentence “The sick man called for an ambulance to take him to the _____” with the word hospital. LLMs function in the same way but on a much larger, more nuanced scale. Rather than stopping at a single word, an LLM can predict more-complex content, such as the most likely multi-paragraph response or translation.
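The fill-in-the-blank idea can be illustrated with a toy statistical predictor. The following Python sketch is not how an LLM is built; it assumes a tiny made-up corpus (`CORPUS`) and hypothetical helper names, and it only counts which word most often follows another. It shows the basic principle of choosing the most probable continuation.

```python
from collections import Counter, defaultdict

# Toy corpus (hypothetical; a real model trains on vastly more text).
CORPUS = [
    "the sick man called for an ambulance to take him to the hospital",
    "the ambulance drove the patient to the hospital",
    "she walked to the store",
]

def train_bigram_counts(sentences):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probabilities(counts, prev_word):
    """Turn the raw counts for one preceding word into probabilities."""
    followers = counts[prev_word]
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()}

def predict_next(counts, prev_word):
    """Return the most probable next word, or None if the word was never seen."""
    probs = next_word_probabilities(counts, prev_word)
    return max(probs, key=probs.get) if probs else None

counts = train_bigram_counts(CORPUS)
prediction = predict_next(counts, "the")  # "hospital" for this corpus
```

In this corpus the word hospital follows the word the in two of six cases, more than any other word, so it is the prediction. An LLM replaces these simple counts with billions of learned parameters and conditions on the whole prompt rather than on one preceding word.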

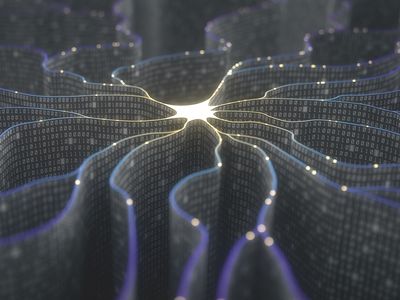

An LLM is initially trained with textual content. The training process may involve unsupervised learning (the initial process of forming connections between unlabeled and unstructured data) as well as supervised learning (the process of fine-tuning the model to allow for more targeted analysis). This deep learning takes place in neural network models known as transformers, which rapidly transform one type of input to a different type of output. Transformers take advantage of a concept called self-attention, which allows LLMs to analyze relationships between words in an input and assign them weights to determine relative importance. When a prompt is input, the weights are used to predict the most likely textual output.
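Self-attention can be sketched in a few lines of Python. The example below is a simplified illustration, not a production transformer: it assumes made-up two-dimensional word vectors (`EMBEDDINGS`) and uses the input vectors directly as queries, keys, and values, whereas a real transformer learns separate projection matrices for each. It shows how every word receives a weight for every other word and how those weights blend the inputs.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Scaled dot-product self-attention with identity projections.

    Assumption: queries, keys, and values are the input vectors themselves.
    """
    scale = math.sqrt(len(vectors[0]))
    weights = []
    outputs = []
    for query in vectors:
        # Score this word against every word in the input, itself included.
        scores = [dot(query, key) / scale for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted average of all the input vectors.
        outputs.append([
            sum(w * v[i] for w, v in zip(row, vectors))
            for i in range(len(query))
        ])
    return weights, outputs

# Hypothetical 2-dimensional embeddings for three words.
EMBEDDINGS = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
attention_weights, attended = self_attention(EMBEDDINGS)
```

Each row of `attention_weights` sums to 1, and the first word gives more weight to the second word, whose vector is similar to its own, than to the third, whose vector is not.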

History

The first language models, such as the Massachusetts Institute of Technology’s Eliza program from 1966, used a predetermined set of rules and heuristics to rephrase users’ words into a question based on certain keywords. Such rule-based models were followed by statistical models, which used probabilities to predict the most likely words. Neural networks built upon earlier models by “learning” as they processed information, using a node model with artificial neurons. Nodes were activated based on other nodes’ output.

The first large language models emerged as a consequence of the introduction of transformer models in 2017. The new speeds provided by transformers allowed for even more parameters and data to be incorporated into models, paving the way for the introduction of the first LLMs, which included Google’s BERT (Bidirectional Encoder Representations from Transformers) and OpenAI’s GPT (Generative Pre-trained Transformer), the following year.

LLMs improved their task efficiency in comparison with smaller models and even acquired entirely new capabilities. These “emergent abilities” included performing numerical computations, translating languages, and unscrambling words. LLMs have become popular for their wide variety of uses, such as summarizing passages, rewriting content, and functioning as chatbots. Some LLMs are even able to generate captions for inputted images.

LLMs can be used by computer programmers to generate code in response to specific prompts. Additionally, if this code snippet inspires more questions, a programmer can easily inquire about the LLM’s reasoning. Much in the same way, LLMs are useful for generating content on a nontechnical level as well. LLMs may help to improve productivity on both individual and organizational levels, and their ability to generate large amounts of information is a part of their appeal.

Complications and concerns

LLMs have a number of drawbacks. The models are incredibly resource intensive, sometimes requiring up to hundreds of gigabytes of RAM. Moreover, their inner mechanisms are highly complex, which makes troubleshooting difficult when results go awry. LLMs will sometimes present false or misleading information as fact, a phenomenon known as a hallucination. One method of mitigating this issue is prompt engineering, whereby engineers design prompts that aim to extract the optimal output from the model.
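The memory figure follows from simple arithmetic on the parameter count. The Python sketch below is a rough estimate only: it assumes each parameter is stored in 2 bytes (a 16-bit number), and it counts the weights alone, ignoring the additional memory needed while the model runs or trains. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Estimate memory for the weights alone, in gigabytes (10**9 bytes).

    Assumption: 2 bytes per parameter, as with 16-bit storage.
    """
    return num_parameters * bytes_per_parameter / 1e9

small = weight_memory_gb(110_000_000)      # a 110-million-parameter model
large = weight_memory_gb(340_000_000_000)  # a 340-billion-parameter model
```

Under these assumptions a model with 110 million parameters needs about 0.22 gigabyte for its weights, whereas a model with 340 billion parameters needs about 680 gigabytes.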

Numerous ethical and social risks still exist even with a fully functioning LLM. A growing number of artists and creators have claimed that their work is being used to train LLMs without their consent. This has led to multiple lawsuits, as well as questions about the implications of using AI to create art and other creative works. Models may perpetuate stereotypes and biases that are present in the information they are trained on. This discrimination may exist in the form of biased language or exclusion of content about people whose identities fall outside social norms. Other issues outlined by experts include information hazards, wherein LLMs may disclose private information present in training data; malicious use, wherein bad actors use the models to bolster disinformation campaigns or commit fraud; and economic harms, in which LLMs may displace workers and widen inequality gaps between those with access to the technology and those without such access.

Michael McDonough